Selecting the appropriate statistical test is crucial for deriving valid conclusions from data. This article outlines key principles, practical considerations, and step-by-step guidance to help researchers, analysts, and students identify the optimal test for their specific scenario.

Fundamentals of Test Selection

Understanding Data Types

Every statistical test is designed for particular types of data. Before choosing a test, categorize your variables as either categorical or continuous. Categorical variables (e.g., gender, treatment group) involve distinct categories, whereas continuous variables (e.g., weight, height) represent measurable quantities. Mixing up these types can lead to incorrect analysis and misleading results.

Defining the Research Question

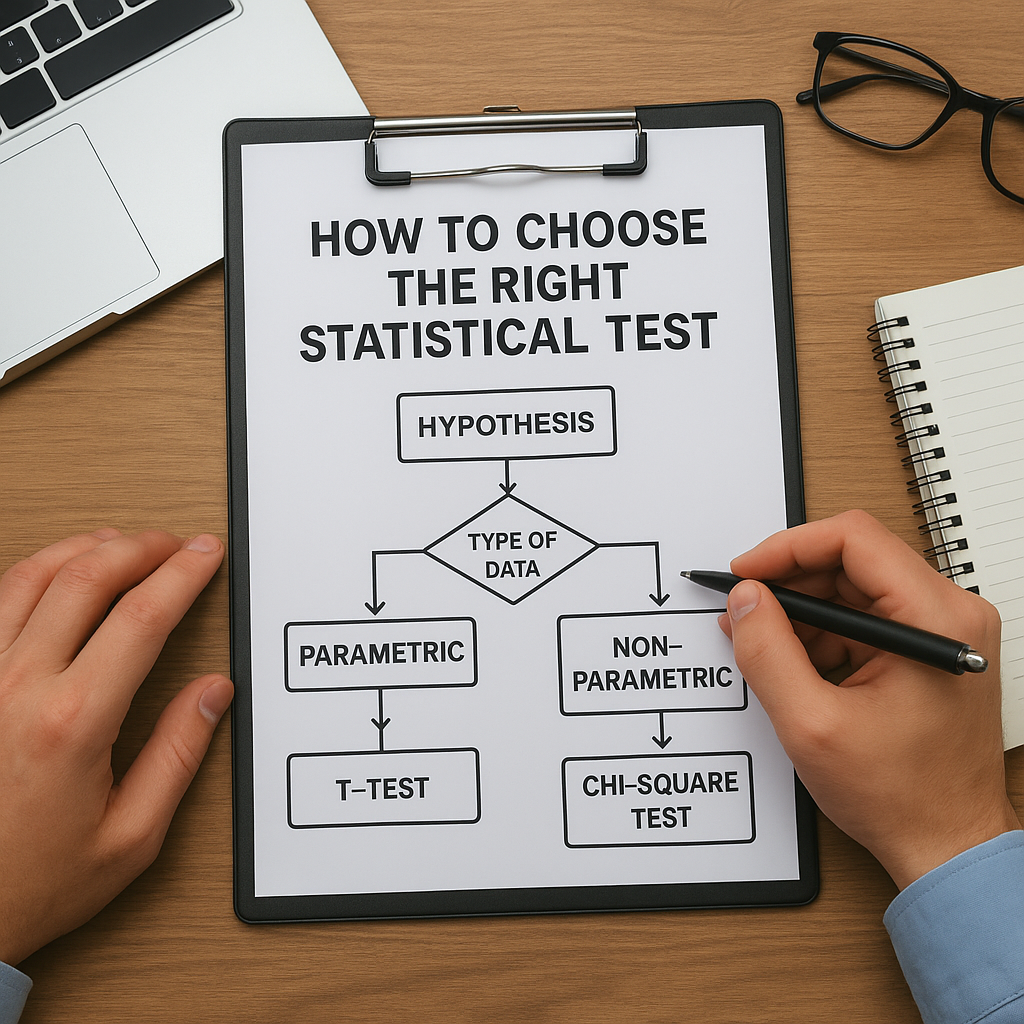

Begin by articulating a clear hypothesis. Are you comparing means, evaluating associations, or assessing differences in proportions? A well-defined hypothesis directs you toward either comparative tests (like t-tests or ANOVA) or associative tests (like correlation or chi-square).

Hypothesis Types

- One-sample tests compare sample metrics against a known value.

- Two-sample tests involve comparisons between two independent groups.

- Paired tests apply to related observations (e.g., pre- and post-treatment).

- Multiple group tests assess differences across three or more groups (e.g., ANOVA).

Key Considerations for Choosing Tests

Parametric versus Nonparametric

If your data meet certain assumptions—primarily normality and homogeneity of variance—you can use more powerful parametric tests (e.g., t-test, ANOVA). When assumptions are violated, switch to nonparametric alternatives such as the Mann–Whitney U test, Kruskal–Wallis test, or Wilcoxon signed-rank test.

Comparing Two Groups

- Independent samples: use independent-samples t-test or Mann–Whitney U.

- Related samples: use paired-samples t-test or Wilcoxon signed-rank.

- Proportions: use chi-square test or Fisher’s exact test for small sample sizes.

Multiple Group Comparisons

When comparing more than two groups, ANOVA is the parametric choice. If ANOVA assumptions fail, opt for Kruskal–Wallis. For repeated measures, consider repeated-measures ANOVA or Friedman test.

Assessing Relationships

To measure association strength between two continuous variables, Pearson’s correlation (parametric) or Spearman’s rho (nonparametric) is appropriate. Regression analysis extends this approach to model how one variable predicts another, estimating effect size and accounting for potential confounders.

Implementing and Interpreting Results

Checking Assumptions

Before running any statistical test, verify assumptions:

- Normality: Use graphical methods (e.g., Q-Q plots) and tests (e.g., Shapiro–Wilk).

- Homoscedasticity: Assess constant variance across groups via Levene’s test.

- Independence of observations: ensure no hidden dependencies.

If violations occur, transformation (e.g., log, square root) or nonparametric methods often rescue the analysis.

Calculating Significance

Statistical significance is determined by a p-value. Compare the p-value to your alpha level (commonly 0.05). If p ≤ alpha, reject the null hypothesis. However, significance alone does not quantify practical importance; always report effect size measures (e.g., Cohen’s d, eta squared).

Confidence Intervals

Complement p-values with confidence intervals to indicate the range of plausible parameter values. A 95% confidence interval that does not include the null value provides additional support for a significant finding.

Practical Tips for Robust Analysis

Software and Implementation

Popular statistical software (e.g., R, Python with SciPy and statsmodels, SPSS, SAS) provides built-in functions for most tests. For example, in R:

- t.test() for t-tests

- wilcox.test() for Wilcoxon tests

- aov() for ANOVA

- cor.test() for correlations

Always double-check input parameters and output interpretation guidelines.

Reporting Standards

Follow established reporting guidelines (e.g., APA, CONSORT). Include:

- Test statistic value (t, F, χ², etc.)

- Degrees of freedom

- Exact p-value

- Effect size estimate

- Confidence intervals

Common Pitfalls

- Overlooking assumption checks.

- Conducting multiple tests without correction (use Bonferroni or False Discovery Rate).

- Misinterpreting non-significant results as evidence of no effect.

- Neglecting data cleaning and outlier detection.

Final Recommendations

Careful test selection hinges on understanding your data, verifying assumptions, and interpreting results within context. Combining statistical rigor with transparent reporting ensures that conclusions drawn from your analyses are both credible and impactful. Continuous learning and practice will sharpen your ability to choose the most suitable statistical tests for diverse research scenarios.