Randomized controlled trials (RCTs) stand at the forefront of evidence-based decision making in medicine, public health, and social sciences. By carefully assigning participants to different interventions and rigorously measuring outcomes, RCTs aim to isolate the true effect of a treatment or policy. This article delves into the core principles, practical design, statistical analysis, and ethical concerns surrounding RCTs. We will explore how proper randomization, meticulous allocation concealment, and systematic safeguards against bias and confounding can yield robust conclusions that drive clinical guidelines and policy reforms.

Fundamental Principles of Randomized Controlled Trials

Randomization and Allocation

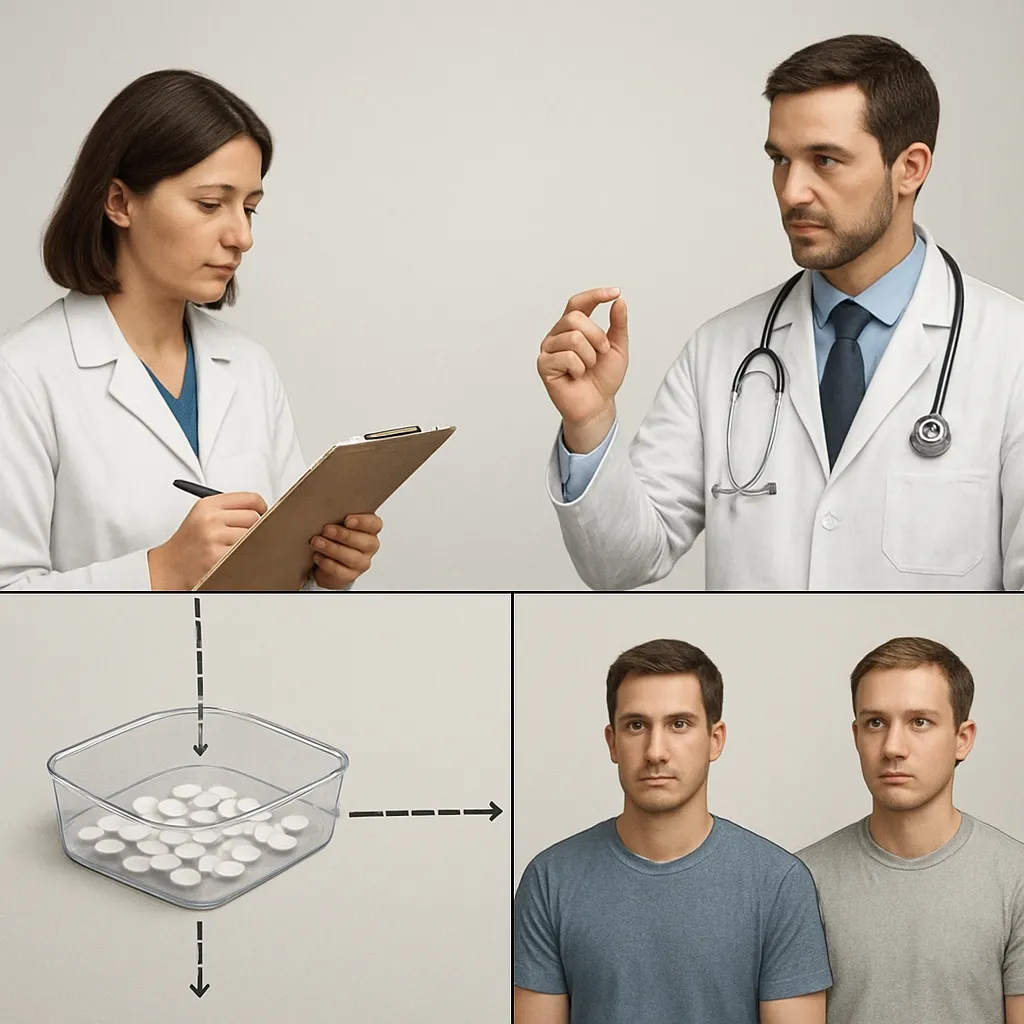

At the heart of any RCT lies the process of randomly assigning participants to intervention or comparison groups. Proper randomization balances both known and unknown confounders across arms in expectation, so that any remaining baseline differences arise by chance rather than from systematic selection. Techniques such as computer-generated random sequences, random permuted blocks, or stratified schemes keep assignment unpredictable while controlling group sizes and the balance of key prognostic factors. Equally crucial is allocation concealment, which prevents trial personnel from influencing enrollment based on upcoming group placement. Sealed opaque envelopes, centralized telephone services, or electronic systems are common strategies to guard the assignment process against manipulation.
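As an illustration of the permuted-block technique mentioned above, here is a minimal Python sketch. The function name `permuted_block_sequence`, the arm labels, and the block size of 4 are assumptions chosen for the example, not a prescribed standard. A real trial would generate and store the sequence in a concealed, centrally managed system rather than in a script visible to recruiters.

```python
import random


def permuted_block_sequence(n_participants, arms=("treatment", "control"),
                            block_size=4, seed=None):
    """Return an allocation list built from randomly permuted blocks."""
    if block_size % len(arms) != 0:
        raise ValueError("block_size must be a multiple of the number of arms")

    rng = random.Random(seed)          # a seeded generator makes the list reproducible
    per_arm = block_size // len(arms)  # how often each arm appears in one block
    sequence = []
    while len(sequence) < n_participants:
        block = list(arms) * per_arm   # equal count of every arm within the block
        rng.shuffle(block)             # random order inside the block
        sequence.extend(block)
    return sequence[:n_participants]   # the last block may be cut short


# Hypothetical usage: 12 participants, two arms, blocks of four.
allocation = permuted_block_sequence(12, seed=2024)
print(allocation)
```

Within every complete block the arms are exactly balanced, so group sizes never drift far apart during enrollment. Small fixed blocks can make the last assignment in a block predictable, which is one reason trials often vary the block size.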

Control and Comparison Groups

A valid RCT requires a reliable benchmark to evaluate the experimental intervention. A control group might receive a placebo, standard therapy, or no intervention, depending on ethical and practical considerations. Placebo-controlled designs are common in pharmacological research, where blinding participants to inactive treatment helps isolate the specific effect of an active agent. In behavioral or educational trials, “usual care” or alternative program controls may serve as comparators. Choosing an appropriate control minimizes threats to internal validity and ensures meaningful interpretation of differences in outcomes.

Blinding and Minimizing Confounding

Blinding keeps people involved in a trial from knowing which treatment each subject receives. Usage of the terms varies, but a single-blind trial typically masks participants only, a double-blind trial also masks the investigators who deliver care and assess outcomes, and a triple-blind trial extends masking to those who analyze the data. Effective blinding reduces the risk of differential behavior and assessment based on preconceived notions about efficacy. Without blinding, placebo effects, observer-expectancy bias, and differential co-interventions can skew results. Alongside blinding, pretrial steps such as rigorous eligibility screening and baseline stratification help mitigate confounding influences, enhancing the trial’s ability to attribute observed differences to the intervention itself.

Design and Implementation

Sample Size Determination and Statistical Power

Determining the appropriate sample size is a balancing act between feasibility and the ability to detect clinically meaningful effects. The concept of statistical power refers to the probability of correctly rejecting a false null hypothesis. Underpowered trials risk missing true effects, while excessively large ones may detect trivial differences that lack practical relevance. Investigators must specify expected effect size, Type I error rate (α), and desired power (commonly 80% or 90%) when calculating participant numbers. Real-world constraints such as recruitment rates, budget, and duration further shape these calculations.
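To make the calculation concrete, the sketch below applies the standard normal-approximation formula for comparing two means with equal allocation, n per arm = 2 × ((z for 1 − α/2 + z for power) × σ / δ)². The function name `n_per_arm` and the example values (a 5-point difference with a standard deviation of 10) are assumptions for illustration. Trials with binary or time-to-event outcomes, unequal allocation, or clustering need different formulas.

```python
import math
from statistics import NormalDist


def n_per_arm(delta, sigma, alpha=0.05, power=0.80):
    """Participants needed in each arm to detect a mean difference `delta`.

    Normal approximation for a two-sided test with equal group sizes and a
    common standard deviation `sigma`.
    """
    if delta == 0:
        raise ValueError("delta must be non-zero")
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # critical value for Type I error
    z_beta = NormalDist().inv_cdf(power)           # quantile for the desired power
    n = 2 * ((z_alpha + z_beta) * sigma / delta) ** 2
    return math.ceil(n)                            # round up to whole participants


# Hypothetical example: detect a 5-point difference when sigma is 10.
print(n_per_arm(delta=5, sigma=10))               # 63 per arm at 80% power
print(n_per_arm(delta=5, sigma=10, power=0.90))   # 85 per arm at 90% power
```

Halving the detectable difference roughly quadruples the required sample, which is why the assumed effect size deserves careful justification. In practice the result is usually inflated further to allow for anticipated dropout.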

Outcome Measures and Participant Selection

Clear, objective, and reliable outcome measures are essential for drawing valid conclusions. Primary outcomes should directly reflect the intervention’s goals and be measured using validated instruments or clinical endpoints. Secondary and exploratory outcomes can provide additional context but should be specified in advance to avoid post hoc data dredging. Eligibility criteria (covering demographics, disease severity, co-morbidities, and prior treatments) define the trial population. While broader inclusion enhances generalizability, narrow criteria may reduce variability between participants and so make a treatment effect easier to detect.

Conducting the Trial and Ensuring Validity

Operational integrity is vital for preserving trial rigor. Standardized protocols, thorough staff training, and consistent data collection procedures guard against protocol deviations. Regular monitoring visits and data audits help identify and rectify inconsistencies. Maintaining high follow-up rates prevents attrition bias; strategies include frequent reminders, flexible scheduling, and participant engagement efforts. These measures collectively uphold the validity of inferences drawn from the trial.

Statistical Analysis and Interpretation

Hypothesis Testing and p-Values

Statistical hypothesis testing forms the backbone of RCT analysis. Investigators state a null hypothesis (no difference between treatments) and an alternative hypothesis (a difference exists). The p-value quantifies the probability of observing data as extreme as—or more so than—the actual results, assuming the null hypothesis is true. A p-value below the predetermined threshold (often 0.05) suggests statistical significance. However, p-values do not convey effect size or clinical importance; confidence intervals and measures such as number needed to treat (NNT) provide complementary insights.
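The relationship between a p-value, a confidence interval, and the NNT can be sketched for a binary outcome as follows. This is one simple approach (a two-proportion z-test with a Wald interval), not the only valid analysis. The function name `compare_event_rates` and the event counts are hypothetical.

```python
import math
from statistics import NormalDist


def compare_event_rates(events_ctrl, n_ctrl, events_trt, n_trt, alpha=0.05):
    """Two-sided z-test, Wald confidence interval, and NNT for two event rates."""
    p_ctrl = events_ctrl / n_ctrl
    p_trt = events_trt / n_trt
    arr = p_ctrl - p_trt  # absolute risk reduction (positive favors treatment)

    # Test statistic: the standard error uses the pooled rate, as assumed under the null.
    pooled = (events_ctrl + events_trt) / (n_ctrl + n_trt)
    se_null = math.sqrt(pooled * (1 - pooled) * (1 / n_ctrl + 1 / n_trt))
    if se_null == 0:
        raise ValueError("no variation in outcomes; the test is undefined")
    z = arr / se_null
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))

    # Confidence interval: the standard error uses each arm's own rate.
    se = math.sqrt(p_ctrl * (1 - p_ctrl) / n_ctrl + p_trt * (1 - p_trt) / n_trt)
    z_crit = NormalDist().inv_cdf(1 - alpha / 2)
    ci = (arr - z_crit * se, arr + z_crit * se)

    nnt = 1 / arr if arr > 0 else None  # only meaningful when treatment reduces risk
    return {"arr": arr, "p_value": p_value, "ci": ci, "nnt": nnt}


# Hypothetical counts: 40 of 200 control and 24 of 200 treated participants had the event.
result = compare_event_rates(40, 200, 24, 200)
print(result)
```

With these made-up counts the absolute risk reduction is 8 percentage points, which corresponds to an NNT of about 12.5. The p-value alone would not reveal that magnitude, and the confidence interval shows how imprecisely it is estimated.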

Handling Missing Data and Intention-to-Treat

Missing data can introduce serious bias if not addressed properly. The intention-to-treat (ITT) principle mandates analyzing participants according to their original assignments, regardless of adherence. ITT preserves the benefits of randomization and, in superiority trials, typically yields conservative estimates of effectiveness. When data are missing, techniques such as multiple imputation or mixed-effects models can mitigate bias, provided their assumptions about the missingness mechanism are plausible. Last observation carried forward (LOCF) is simple and still encountered, but it can bias estimates in either direction and is generally discouraged as a primary approach. Transparent reporting of missing data patterns and sensitivity analyses bolster the credibility of findings.
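The difference between an ITT analysis and a per-protocol analysis, which keeps only participants who adhered to their assigned arm, can be shown with a small sketch. The `Participant` record, the helper names, and the counts are all hypothetical and chosen only to make the contrast visible. Outcomes are assumed to be fully observed here, so the example sidesteps missing data entirely.

```python
from dataclasses import dataclass


@dataclass
class Participant:
    assigned: str    # arm assigned at randomization
    adhered: bool    # whether the participant followed the assigned protocol
    recovered: bool  # binary outcome


def recovery_rate(group):
    if not group:
        raise ValueError("empty analysis group")
    return sum(p.recovered for p in group) / len(group)


def itt_rate(participants, arm):
    """Intention-to-treat: everyone randomized to `arm`, adherent or not."""
    return recovery_rate([p for p in participants if p.assigned == arm])


def per_protocol_rate(participants, arm):
    """Per-protocol: only those randomized to `arm` who adhered."""
    return recovery_rate(
        [p for p in participants if p.assigned == arm and p.adhered]
    )


# Made-up data: 10 participants per arm, with 4 non-adherent in the treatment arm.
participants = (
    [Participant("treatment", True, True)] * 5
    + [Participant("treatment", True, False)] * 1
    + [Participant("treatment", False, True)] * 1
    + [Participant("treatment", False, False)] * 3
    + [Participant("control", True, True)] * 4
    + [Participant("control", True, False)] * 6
)

for arm in ("treatment", "control"):
    print(arm, itt_rate(participants, arm), per_protocol_rate(participants, arm))
```

In this toy dataset the per-protocol recovery rate in the treatment arm (5 of 6) is higher than the ITT rate (6 of 10). Dropping non-adherent participants breaks the comparability that randomization created, because those who adhere may differ systematically from those who do not.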

Ethical and Regulatory Considerations

Informed Consent and Participant Protection

Adherence to ethical standards safeguards participants’ rights and welfare. Prospective trial subjects must receive clear information on potential risks, benefits, procedures, and alternatives before providing voluntary consent. Institutional review boards (IRBs) or ethics committees review study protocols to ensure ethical compliance. Data and safety monitoring boards (DSMBs) provide ongoing oversight during the trial and can recommend early termination if harm emerges or overwhelming benefit is observed.

Trial Registration, Transparency, and Replication

To promote openness and prevent selective reporting, RCTs should be registered in public databases prior to enrollment. Prospective registration of objectives, methods, and outcomes fosters accountability. Sharing de-identified datasets and analysis code furthers scientific collaboration and allows independent verification of results. Robust findings often invite follow-up studies and replication, which test reproducibility across different settings or populations, thereby reinforcing confidence in the evidence base.