Outliers are observations that deviate markedly from the rest of a dataset. Their presence can signal measurement errors, rare phenomena, or fresh insights waiting to be uncovered. Handling these data points properly is essential to preserve the integrity and reliability of any statistical analysis.

Understanding the Nature of Outliers

Definition and Context

An outlier is an observation that lies an abnormal distance from other values in a random sample from a population. While some outliers result from genuine variation in the data-generating process, others stem from errors in recording, data entry or instrumentation. Recognizing the distinction between these scenarios is crucial for drawing valid conclusions.

Sources of Outliers

- Measurement or recording errors, such as typos or sensor malfunctions.

- Natural variation in rare events, like extreme weather measurements or financial crashes.

- Data integration issues when combining datasets from multiple sources, such as mismatched units or duplicated records.

- Intentional or unintentional manipulation, for example, fraudulent transactions inflating values.

Detecting and Visualizing Outliers

Before deciding on treatment, analysts must reliably identify outliers. Various methods exist to flag these anomalies:

- Boxplots: Visualizing quartiles and the interquartile range (IQR), with points below Q1 − 1.5×IQR or above Q3 + 1.5×IQR considered potential outliers.

- Z-score method: Identifying observations with a standardized score beyond a chosen threshold, often ±3.

- Density-based techniques like DBSCAN, which group points in dense regions into clusters and label points in sparse regions as noise, that is, as candidate anomalies.

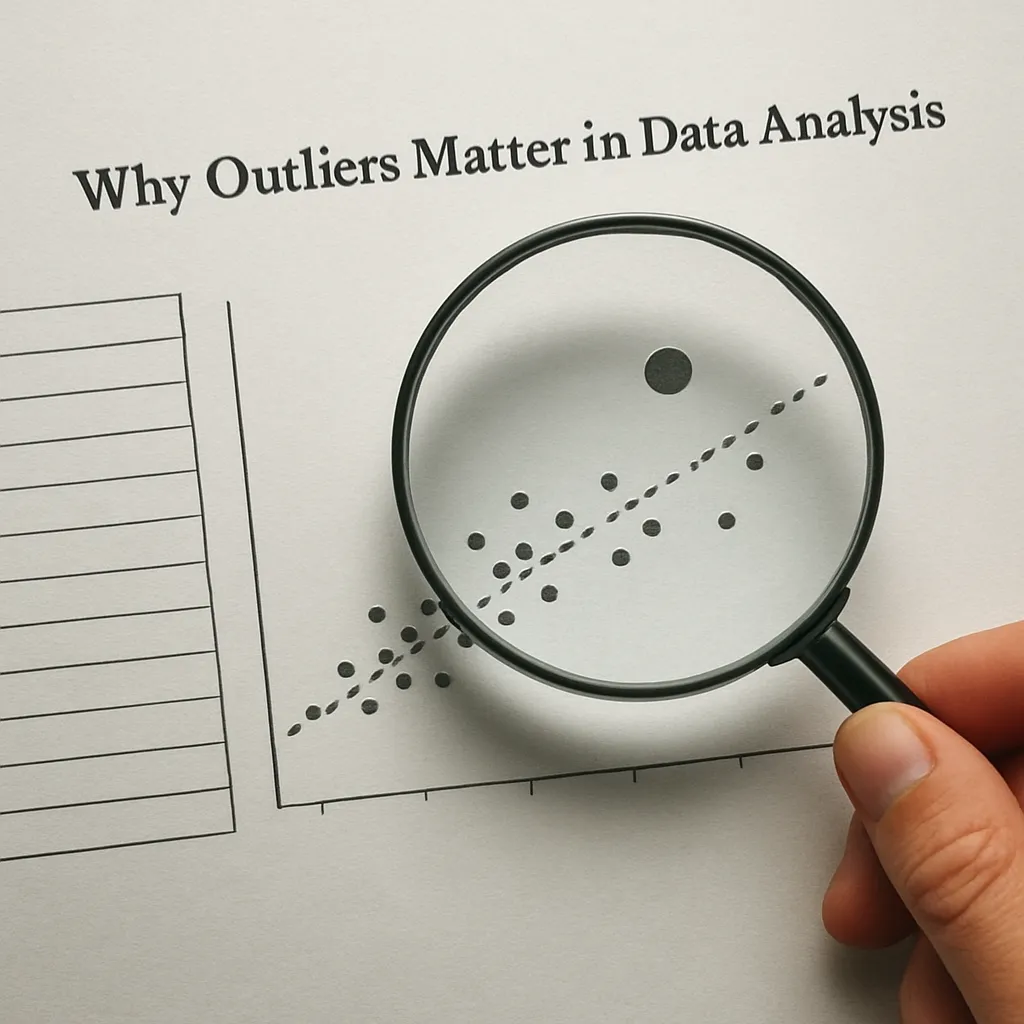

- Visualization tools such as scatter plots, Q–Q plots, and heatmaps that reveal distributional irregularities.
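The boxplot and z-score rules above can be sketched in a few lines. This is a minimal illustration using only Python's standard library; the function names, the sample `data`, and the defaults (`k=1.5`, `threshold=3.0`) are assumptions chosen for the example, and the quartiles use the "inclusive" interpolation method, which is only one of several conventions.

```python
import statistics


def iqr_outliers(values, k=1.5):
    """Return values outside the fences [Q1 - k*IQR, Q3 + k*IQR]."""
    q1, _, q3 = statistics.quantiles(values, n=4, method="inclusive")
    iqr = q3 - q1
    lower, upper = q1 - k * iqr, q3 + k * iqr
    return [v for v in values if v < lower or v > upper]


def zscore_outliers(values, threshold=3.0):
    """Return values whose absolute z-score exceeds the threshold."""
    mean = statistics.fmean(values)
    sd = statistics.stdev(values)  # sample standard deviation
    if sd == 0:
        return []  # all values identical: nothing can be flagged
    return [v for v in values if abs(v - mean) / sd > threshold]


# Hypothetical sample: 20 readings between 10 and 13, plus one extreme value.
data = [10, 12, 11, 13, 12, 11, 10, 12, 13, 11,
        12, 11, 10, 13, 12, 11, 12, 10, 13, 11, 95]

print(iqr_outliers(data))
print(zscore_outliers(data))
```

On this sample both rules flag only the value 95. Because the outlier itself inflates the standard deviation used by the z-score rule, a ±3 threshold can fail to flag anything in very small samples, which is one reason to check it against the IQR rule.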

Influence on Descriptive Statistics

Outliers can dramatically skew basic summaries:

- The mean is highly sensitive, shifting toward extreme values and misrepresenting central tendency.

- Variance and standard deviation inflate when outliers lie far from the mean, exaggerating dispersion.

- Percentile-based measures like the median and interquartile range remain more robust in the presence of extremes.
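A small numerical comparison makes the contrast concrete. The sketch below uses made-up values (`clean` and `contaminated` are assumptions for illustration) and compares moment-based summaries with percentile-based ones before and after a single extreme value is added.

```python
import statistics


def summarize(values):
    """Return moment-based and percentile-based summaries of a sample."""
    q1, median, q3 = statistics.quantiles(values, n=4, method="inclusive")
    return {
        "mean": statistics.fmean(values),
        "stdev": statistics.stdev(values),
        "median": median,
        "iqr": q3 - q1,
    }


# Hypothetical measurements centered on 50, then the same data plus one extreme.
clean = [48, 50, 51, 49, 52, 50, 47, 53, 50]
contaminated = clean + [500]

summary_clean = summarize(clean)
summary_bad = summarize(contaminated)

for name in ("mean", "stdev", "median", "iqr"):
    print(f"{name:>6}: {summary_clean[name]:8.2f} -> {summary_bad[name]:8.2f}")
```

With these numbers the mean moves from 50 to 95 and the standard deviation grows by well over an order of magnitude, while the median stays at 50 and the IQR changes only slightly.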

Impact on Inferential Techniques

Inferential methods assume certain data characteristics, and outliers may violate these assumptions:

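- Regression analysis: A single outlier with an extreme predictor value (a high-leverage point) can pull the fitted line toward itself, distorting slope estimates and undermining model validity.

- Hypothesis testing: Outliers can distort test statistics in either direction, shifting estimated means or inflating standard errors, which raises the risk of both Type I and Type II errors.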

- ANOVA and other variance-based tests: Inflated variance reduces statistical power, masking real effects.

- Machine-learning algorithms: k-nearest neighbors and clustering rely on distance metrics, so outliers can distort neighborhoods and pull cluster centers away from the bulk of the data.
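The regression point can be illustrated with a hand-rolled least-squares fit. This is a sketch under stated assumptions: the data are synthetic (an exact line `y = 2x + 1` plus one invented point), and `ols_fit` and `leverage` are hypothetical helper names. The leverage formula is the standard one for simple linear regression, h = 1/n + (x − x̄)² / Sxx.

```python
def ols_fit(xs, ys):
    """Ordinary least squares for y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def leverage(xs, x):
    """Leverage of a point with predictor value x in simple linear regression."""
    n = len(xs)
    mean_x = sum(xs) / n
    sxx = sum((v - mean_x) ** 2 for v in xs)
    return 1 / n + (x - mean_x) ** 2 / sxx


# Synthetic data lying exactly on y = 2x + 1.
xs = [1, 2, 3, 4, 5, 6, 7, 8]
ys = [2 * x + 1 for x in xs]
slope_clean, _ = ols_fit(xs, ys)

# Add one point that is far away in x and far below the line.
xs_out = xs + [30]
ys_out = ys + [10]
slope_out, _ = ols_fit(xs_out, ys_out)

print(f"slope without outlier: {slope_clean:.3f}")
print(f"slope with outlier:    {slope_out:.3f}")
print(f"leverage of added point: {leverage(xs_out, 30):.2f}")
```

In this constructed case one added observation out of nine is enough to flatten the slope from 2 to well below 0.5, and its leverage is close to the maximum possible value of 1.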

Robust Methods and Mitigation Strategies

To avoid misleading results, analysts employ various strategies tailored to the nature and source of outliers:

Data Cleaning and Transformation

- Imputation: Replacing anomalous values with estimated ones based on neighborhood or model-based techniques.

- Winsorizing: Replacing values beyond a specified percentile with the value at that percentile, reducing their impact on summary statistics without discarding observations.

- Logarithmic or Box–Cox transformations: Compressing scale differences to bring outliers closer to the bulk distribution.
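Winsorizing and power transformations are simple enough to sketch directly. The version below is illustrative only: `winsorize` clamps a fixed fraction of values in each tail (one common convention among several), `box_cox` applies the standard one-parameter Box–Cox formula for strictly positive data, and the sample values and the choice of `lam` are assumptions.

```python
import math


def winsorize(values, proportion=0.1):
    """Clamp the lowest and highest `proportion` of values to the nearest kept value."""
    ordered = sorted(values)
    k = int(proportion * len(ordered))  # number of values to clamp in each tail
    if k == 0:
        return list(values)
    low, high = ordered[k], ordered[-k - 1]
    return [min(max(v, low), high) for v in values]


def box_cox(value, lam):
    """One-parameter Box-Cox transform; requires value > 0."""
    if value <= 0:
        raise ValueError("Box-Cox requires strictly positive values")
    if lam == 0:
        return math.log(value)  # the lam -> 0 limit is the natural log
    return (value ** lam - 1) / lam


# Hypothetical positive measurements with one extreme value.
data = [3, 5, 4, 6, 5, 4, 7, 5, 6, 120]

winsorized = winsorize(data, proportion=0.1)
logged = [box_cox(v, 0) for v in data]

print(winsorized)
print([round(v, 2) for v in logged])
```

Winsorizing changes only the tail values (here 3 becomes 4 and 120 becomes 7), whereas the log transform rescales every observation and keeps their order. In practice the Box–Cox parameter is usually estimated from the data rather than fixed by hand.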

Robust Statistical Techniques

- M-estimators: Generalizations of maximum-likelihood that downweight the influence of outliers.

- Trimmed means: Excluding a fixed proportion of the highest and lowest values before calculating averages.

- Quantile regression: Modeling conditional quantiles, such as the median, instead of the conditional mean, offering resilience to extreme observations.

- Bootstrap methods: Resampling the data with replacement to assess how stable an estimate is across many simulated samples.
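Three of these ideas can be sketched for the simplest case, estimating a location (center) of one variable; quantile regression is omitted because it needs an optimization routine. Everything here is an illustrative assumption rather than a reference implementation: the helper names, the Huber tuning constant `c=1.345`, the fixed MAD-based scale, the percentile-style bootstrap interval, and the sample data.

```python
import random
import statistics


def trimmed_mean(values, proportion=0.1):
    """Mean after dropping the lowest and highest `proportion` of values."""
    ordered = sorted(values)
    k = int(proportion * len(ordered))
    return statistics.fmean(ordered[k:len(ordered) - k])


def huber_location(values, c=1.345, tol=1e-8, max_iter=100):
    """Huber M-estimate of location via iteratively reweighted averaging."""
    mu = statistics.median(values)
    mad = statistics.median([abs(v - mu) for v in values])
    scale = 1.4826 * mad  # MAD rescaled to match the sd under normality
    if scale == 0:
        return mu
    for _ in range(max_iter):
        weights = []
        for v in values:
            r = abs(v - mu) / scale
            weights.append(1.0 if r <= c else c / r)  # downweight large residuals
        new_mu = sum(w * v for w, v in zip(weights, values)) / sum(weights)
        if abs(new_mu - mu) < tol:
            return new_mu
        mu = new_mu
    return mu


def bootstrap_ci(values, stat, n_resamples=2000, alpha=0.05, seed=0):
    """Percentile bootstrap interval for stat(values)."""
    rng = random.Random(seed)
    n = len(values)
    estimates = sorted(stat(rng.choices(values, k=n)) for _ in range(n_resamples))
    low = estimates[int((alpha / 2) * n_resamples)]
    high = estimates[int((1 - alpha / 2) * n_resamples) - 1]
    return low, high


# Hypothetical sample centered on 50 with one extreme value.
data = [48, 50, 51, 49, 52, 50, 47, 53, 50, 500]

print("mean:        ", statistics.fmean(data))
print("trimmed mean:", trimmed_mean(data))
print("Huber:       ", round(huber_location(data), 3))
print("CI of mean:  ", bootstrap_ci(data, statistics.fmean))
print("CI of median:", bootstrap_ci(data, statistics.median))
```

The trimmed mean and the Huber estimate both stay near 50 while the ordinary mean is 95. The bootstrap interval for the mean is far wider than the one for the median, which is the kind of instability the resampling check is meant to expose.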

Advanced Considerations

In complex scenarios, outliers may suggest deeper phenomena rather than mere noise. Understanding their role can lead to significant breakthroughs:

- Rare event modeling in finance or insurance often focuses on tail behavior, requiring specialized distributions such as the generalized Pareto.

- Time-series outliers may indicate change points, prompting regime-switching models or intervention analysis.

- High-dimensional data challenges detection: distance metrics become less informative, necessitating techniques like robust principal component analysis.

- In predictive analytics, monitoring incoming data streams for emerging outliers can trigger real-time anomaly alerts, safeguarding system reliability.
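For the streaming case, one simple approach is to score each new value against a rolling window of recent history. The sketch below uses a median/MAD-based "modified z-score" with a cutoff of 3.5, a commonly cited rule of thumb; the class name, window size, `min_history`, and the sample stream are all assumptions for illustration, and a production monitor would need more care (seasonality, drift, missing data).

```python
from collections import deque
import statistics


class RollingAnomalyDetector:
    """Flag values that are far from the median of a recent window."""

    def __init__(self, window=20, threshold=3.5, min_history=5):
        self.history = deque(maxlen=window)
        self.threshold = threshold
        self.min_history = min_history

    def update(self, x):
        """Return True if x looks anomalous, then add it to the window."""
        flagged = False
        if len(self.history) >= self.min_history:
            med = statistics.median(self.history)
            mad = statistics.median([abs(v - med) for v in self.history])
            if mad > 0:
                # Modified z-score: 0.6745 rescales the MAD to sd units.
                score = 0.6745 * abs(x - med) / mad
                flagged = score > self.threshold
        self.history.append(x)
        return flagged


# Hypothetical sensor stream with one spike.
stream = [20.1, 19.8, 20.3, 20.0, 19.9, 20.2, 20.1, 35.0, 20.0, 19.9]

detector = RollingAnomalyDetector(window=20)
flags = [detector.update(x) for x in stream]
print(flags)
```

Flagged values are still added to the window here, so a genuine level shift eventually becomes the new baseline; excluding them instead would keep the baseline fixed but could cause repeated alerts after a real change point.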

Practical Guidelines for Analysts

Effective handling of outliers combines domain knowledge with statistical rigor:

- Start with an exploratory analysis: visualize distributions and summary statistics before modeling.

- Document every decision: flagging, transforming or excluding outliers must be reproducible and transparent.

- Assess the sensitivity of results: compare inferences with and without suspect points to gauge impact.

- Balance automation with expert review: while algorithms can flag anomalies, human judgment ensures contextually appropriate treatment.