In data-driven research, analysts frequently encounter patterns that seem significant yet prove deceptive upon closer examination. Identifying these illusory associations is crucial for sound decision-making and rigorous analysis. By understanding the mechanisms behind correlation illusions, recognizing common pitfalls, and applying robust diagnostic tools, statisticians can uncover the true relationships hidden in their data.

Understanding the Nature of Correlation Illusions

Correlation measures the degree to which two variables move together, but it does not establish that one causes the other. Pearson's coefficient captures linear association and Spearman's captures monotonic association, and a high value of either can still mislead when it is driven by chance or by unaccounted-for factors. A classic example pairs the number of films Nicolas Cage appears in with the incidence of swimming-pool drownings: an absurd link that shows how spurious patterns can arise from purely coincidental trends.

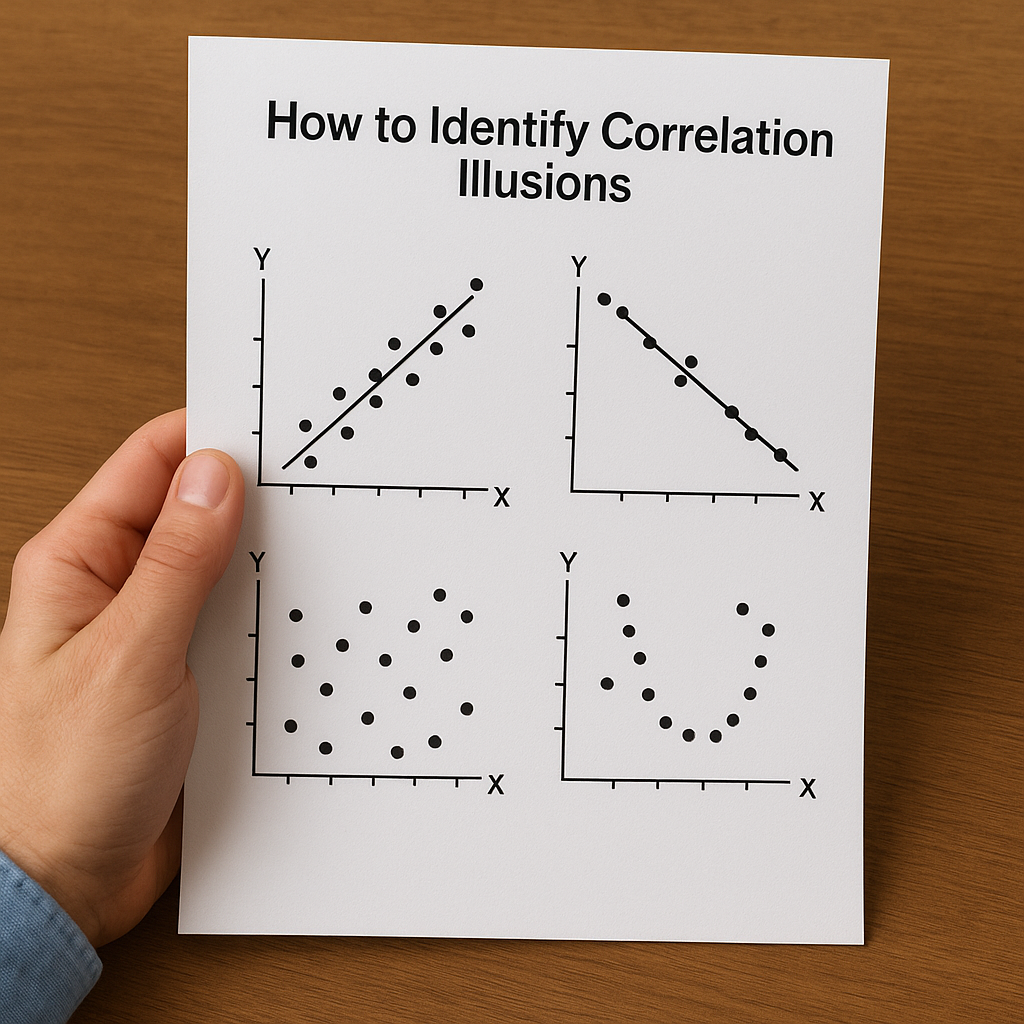

At its core, a correlation illusion emerges when analysts mistake parallel fluctuations for genuine connections. This misinterpretation often stems from overlooking underlying data structure or failing to define a clear causal framework. To navigate these complexities, one must first differentiate between mere association and true causation.
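A short simulation makes the point about parallel fluctuations concrete. The sketch below is illustrative only and assumes NumPy; it generates pairs of independent random walks (trending series with no connection to each other), counts how often their Pearson correlation exceeds 0.5 in absolute value, and repeats the count on first differences. The function names are hypothetical.

```python
import numpy as np


def random_walk(rng, n_steps):
    """Cumulative sum of i.i.d. standard-normal steps: a trending series with no signal."""
    return np.cumsum(rng.standard_normal(n_steps))


def share_strongly_correlated(n_pairs=1000, n_steps=100, threshold=0.5, seed=0):
    """Fraction of independent random-walk pairs with |Pearson r| > threshold,
    measured on the raw levels and on the first differences."""
    rng = np.random.default_rng(seed)
    hits_levels = hits_diffs = 0
    for _ in range(n_pairs):
        a = random_walk(rng, n_steps)
        b = random_walk(rng, n_steps)
        if abs(np.corrcoef(a, b)[0, 1]) > threshold:
            hits_levels += 1
        # Differencing strips out the wandering trends, leaving independent noise
        if abs(np.corrcoef(np.diff(a), np.diff(b))[0, 1]) > threshold:
            hits_diffs += 1
    return hits_levels / n_pairs, hits_diffs / n_pairs


levels, diffs = share_strongly_correlated()
print(f"|r| > 0.5 on levels: {levels:.1%}; on differences: {diffs:.1%}")
```

Because the two series share nothing but a tendency to drift, a sizable share of the level correlations clear the threshold while almost none of the differenced ones do. Differencing is one common check for trend-driven correlation, not a universal fix.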

Key Concepts

- Spurious correlations: Statistical associations without causal basis, often due to random alignment.

- Confounding variables: Hidden drivers that influence both variables of interest, generating a false impression of direct impact.

- Simpson’s paradox: A trend that appears within each subgroup weakens, disappears, or reverses when the subgroups are pooled.

- Outliers: Extreme observations that can disproportionately affect correlation estimates if not managed appropriately.

- Reverse causation: A scenario where the presumed outcome is actually the driver of the supposed cause.
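Simpson's paradox is easiest to see with numbers. The toy data below are hypothetical (chosen by hand, not taken from any study) and the code assumes NumPy: within each of two subgroups the relationship is perfectly negative, yet pooling the groups produces a clearly positive correlation because group B sits higher on both variables.

```python
import numpy as np

# Hypothetical hand-picked data: two subgroups (e.g. two clinics or two cohorts)
groups = {
    "A": (np.array([1, 2, 3, 4]), np.array([6, 5, 4, 3])),
    "B": (np.array([6, 7, 8, 9]), np.array([11, 10, 9, 8])),
}


def pearson(x, y):
    """Pearson correlation coefficient of two 1-D arrays."""
    return float(np.corrcoef(x, y)[0, 1])


for name, (x, y) in groups.items():
    print(f"group {name}: r = {pearson(x, y):+.3f}")  # r = -1.000 in both groups

# Pool the subgroups and ignore group membership
x_all = np.concatenate([x for x, _ in groups.values()])
y_all = np.concatenate([y for _, y in groups.values()])
print(f"pooled:  r = {pearson(x_all, y_all):+.3f}")  # r = +0.667
```

Here group membership plays the role of a confounder: it shifts both variables at once, so ignoring it manufactures a positive association that exists in neither subgroup.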

Common Sources of Misleading Correlation

Avoiding correlation illusions begins with recognizing how they arise. The following are the most prevalent sources of misleading associations:

- Sampling bias: If your sample does not represent the broader population, any observed relationships may not generalize. For example, surveying only premium customers could inflate satisfaction metrics compared to a mass-market audience.

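- Data dredging (p-hacking): Searching for significant correlations among hundreds of variables inevitably turns up some that pass a significance test purely by chance; at a 5% significance level, roughly one in twenty tests of unrelated variables is expected to come out significant.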

- Temporal coincidences: Variables that both follow seasonal or trend cycles—such as retail sales and flu cases—can correlate even when no direct link exists.

- Mathematical coupling: When one variable is partially computed from another, correlation is artificially boosted. An index constructed using two measures will naturally correlate with each component.

- Publication bias: Journals tend to feature striking correlations, encouraging researchers to highlight novel but potentially misleading findings.
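The data-dredging problem can be reproduced with pure noise. This sketch assumes NumPy and approximates each p-value with the Fisher z transformation (a large-sample approximation, used here only to avoid extra dependencies); the function names are hypothetical. Every candidate variable is generated independently of the outcome, so every significant result is a false positive.

```python
import math
from statistics import NormalDist

import numpy as np


def approx_p_value(r, n):
    """Two-sided p-value for H0: rho = 0, via the Fisher z approximation."""
    z = math.atanh(r) * math.sqrt(n - 3)
    return 2 * (1 - NormalDist().cdf(abs(z)))


def dredge(n_obs=50, n_candidates=200, alpha=0.05, seed=42):
    """Correlate one outcome with many predictors that are pure noise by construction
    and return how many of them clear the significance threshold."""
    rng = np.random.default_rng(seed)
    outcome = rng.standard_normal(n_obs)
    candidates = rng.standard_normal((n_candidates, n_obs))  # independent of outcome
    hits = 0
    for x in candidates:
        r = np.corrcoef(x, outcome)[0, 1]
        if approx_p_value(r, n_obs) < alpha:
            hits += 1
    return hits


print(dredge(), "of 200 noise variables look significant")
```

On average about 5% of the candidates (around 10 of 200) pass, which is exactly the false-positive rate the threshold allows; reporting only those hits would look like a discovery.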

In each case, the apparent relationship masks deeper dynamics. Disentangling these effects demands a combination of statistical rigor and domain expertise.

Techniques to Uncover Spurious Relationships

Safeguarding against correlation illusions requires a toolbox of analytical methods designed to test the validity of observed links:

- Stratification: Segment data by suspected confounders (age, income, geography) and examine if correlations remain consistent across subgroups.

- Partial correlation: Calculate the association between two variables while statistically controlling for a third. This removes the linear influence of the controlled variable, but it cannot account for confounders that were never measured.

- Regression diagnostics: Analyze the residuals of a fitted model for patterns that the predictors fail to explain. Non-random residual trends point to omitted variables or a misspecified functional form.

- Sensitivity analysis: Vary modeling assumptions (e.g., inclusion of covariates or missing data techniques) and observe whether results persist.

- Instrumental variables: Use instruments (variables that shift the suspected cause, are unrelated to unmeasured confounders, and affect the outcome only through that cause) to estimate its genuine causal impact.
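Partial correlation can be computed by regressing each variable on the control and correlating the residuals. The sketch assumes NumPy and simulates a hypothetical confounder `z` that drives both `x` and `y` while `x` has no direct effect on `y`; the helper names are illustrative.

```python
import numpy as np


def residualize(v, controls):
    """Residuals of v after an OLS regression on the controls plus an intercept."""
    design = np.column_stack([np.ones(len(v)), controls])
    coef, *_ = np.linalg.lstsq(design, v, rcond=None)
    return v - design @ coef


def partial_corr(x, y, controls):
    """Correlation of x and y after removing the linear effect of the controls from both."""
    return float(np.corrcoef(residualize(x, controls), residualize(y, controls))[0, 1])


# Hypothetical simulation: z drives both x and y; x has no direct effect on y
rng = np.random.default_rng(7)
n = 5_000
z = rng.standard_normal(n)
x = 0.8 * z + rng.standard_normal(n)
y = 0.8 * z + rng.standard_normal(n)

print(f"raw r     = {np.corrcoef(x, y)[0, 1]:.2f}")  # clearly positive (about 0.39)
print(f"partial r = {partial_corr(x, y, z):.2f}")    # near zero
```

The residual approach generalizes directly to several controls: pass a two-dimensional array of shape `(n, k)` as `controls`.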

Building a Causal Framework

Implementing a causal approach begins with constructing a theoretical model, often visualized as a directed acyclic graph (DAG). By mapping every plausible path linking the variables, researchers can identify confounding (backdoor) paths and block them by adjusting for the right variables. Randomized controlled trials (RCTs) remain the gold standard, but in observational settings, carefully chosen instruments and longitudinal designs can support causal inference under explicitly stated assumptions.
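With a single instrument, the instrumental-variable estimate reduces to a ratio of covariances (the Wald estimator), which coincides with two-stage least squares in that case. The sketch below assumes NumPy and a hypothetical data-generating process encoded by the DAG Z → X → Y, with an unmeasured U affecting both X and Y; the coefficients are invented for illustration.

```python
import numpy as np


def iv_estimate(z, x, y):
    """Single-instrument IV (Wald) estimate of the effect of x on y: cov(z, y) / cov(z, x)."""
    return float(np.cov(z, y)[0, 1] / np.cov(z, x)[0, 1])


def ols_slope(x, y):
    """Ordinary least-squares slope of y on x."""
    return float(np.cov(x, y)[0, 1] / np.var(x, ddof=1))


# Hypothetical process: Z -> X -> Y, plus an unmeasured confounder U -> X and U -> Y
rng = np.random.default_rng(3)
n = 20_000
true_effect = 0.5
u = rng.standard_normal(n)  # unmeasured confounder
z = rng.standard_normal(n)  # instrument: moves X, independent of U, no direct path to Y
x = 1.0 * z + 1.0 * u + rng.standard_normal(n)
y = true_effect * x + 1.0 * u + rng.standard_normal(n)

print(f"OLS slope: {ols_slope(x, y):.2f}")        # biased upward by U (about 0.83)
print(f"IV slope:  {iv_estimate(z, x, y):.2f}")   # close to the true 0.5
```

The IV estimate is only as good as the instrument's assumptions; if Z had its own path to Y, or shared a cause with U, the ratio would be biased too.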

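Cross-validation adds a further check: it tests whether predictive relationships generalize beyond the original sample. Splitting data into training and testing sets, and keeping the test set out of every modeling and variable-selection decision, exposes overfitting and reveals whether an association holds on fresh observations. Out-of-sample success shows that an association is stable, not that it is causal.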
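A held-out test set is most valuable when a predictor has been chosen by searching. In this sketch (NumPy assumed, data simulated as pure noise, function names hypothetical), the candidate most correlated with the outcome on the training half is selected, and its correlation is then re-measured on the untouched test half.

```python
import numpy as np


def corr(a, b):
    """Pearson correlation of two 1-D arrays."""
    return float(np.corrcoef(a, b)[0, 1])


def select_then_validate(n_obs=100, n_candidates=500, seed=11):
    """Pick the noise predictor most correlated with y on the training half,
    then measure the same predictor's correlation on the held-out half."""
    rng = np.random.default_rng(seed)
    y = rng.standard_normal(n_obs)
    X = rng.standard_normal((n_obs, n_candidates))  # unrelated to y by construction
    train = np.arange(n_obs) < n_obs // 2           # first half used for selection
    test = ~train                                   # second half never used for selection

    train_r = np.array([corr(X[train, j], y[train]) for j in range(n_candidates)])
    best = int(np.argmax(np.abs(train_r)))
    return float(train_r[best]), corr(X[test, best], y[test])


in_sample, out_of_sample = select_then_validate()
print(f"training r = {in_sample:+.2f}, test r = {out_of_sample:+.2f}")
```

The selected correlation typically looks respectable in training and shrinks toward zero on the test half, because the selection step capitalized on chance.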

Practical Examples and Case Studies

Examining real-world scenarios illuminates how subtle biases can produce strikingly erroneous conclusions.

Case Study 1: Hospital Readmission Rates

Initial data showed that hospitals with higher nurse staffing had higher readmission rates, suggesting that more staff worsened outcomes. However, when patient acuity scores were incorporated, the relationship vanished. Hospitals treating more critical cases naturally require more nurses and also experience more readmissions, so the initial link was entirely due to confounding.
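The hospital pattern can be mimicked with regression adjustment. The numbers below are simulated and are not the data from the case study; the sketch assumes NumPy, and the variable names and coefficients are hypothetical. By construction, acuity drives both staffing and readmissions, and staffing itself has no effect.

```python
import numpy as np


def ols(y, *predictors):
    """OLS coefficients (intercept first) via least squares."""
    design = np.column_stack([np.ones(len(y)), *predictors])
    coef, *_ = np.linalg.lstsq(design, y, rcond=None)
    return coef


# Hypothetical simulated hospitals: acuity drives staffing and readmissions
rng = np.random.default_rng(5)
n = 2_000
acuity = rng.normal(50, 10, n)
nurses_per_bed = 0.02 * acuity + rng.normal(0, 0.1, n)
readmission_rate = 0.004 * acuity + rng.normal(0, 0.02, n)  # no staffing term

naive = ols(readmission_rate, nurses_per_bed)
adjusted = ols(readmission_rate, nurses_per_bed, acuity)
print(f"staffing coefficient, unadjusted:         {naive[1]:.3f}")     # about 0.16
print(f"staffing coefficient, adjusted for acuity: {adjusted[1]:.3f}")  # near zero
```

Stratifying by acuity bands would tell the same story; regression adjustment simply does it in one model.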

Case Study 2: Technology Adoption and Productivity

A company observed that departments purchasing advanced software reported productivity jumps. Managers touted the software’s efficacy, but a detailed analysis found that teams with forward-thinking cultures adopted new tools first. After controlling for team motivation and skill levels, the apparent effect of software adoption was no longer statistically significant.

Case Study 3: Economic Indicators and Market Returns

Some analysts point to consumer confidence indices as predictors of stock market performance. While short-term correlations exist, back-testing suggests that global economic cycles and interest rates are the underlying drivers, with confidence merely moving alongside them. Instrumental-variable estimates indicated that changes in interest-rate expectations accounted for more of the variation in returns than confidence scores did.

Best Practices for Robust Analysis

To minimize the risk of falling prey to correlation illusions, adhere to these guidelines:

- Pre-register hypotheses and analysis protocols to curb p-hacking and selective reporting.

- Identify and measure likely confounders from the outset, informed by domain knowledge, to reduce omitted-variable bias; avoid adjusting for mediators or colliders, which can create bias rather than remove it.

- Conduct a power analysis before data collection to ensure the sample is large enough to detect meaningful effects.

- Apply cross-validation and out-of-sample testing to confirm that predictive performance holds beyond the training data.

- Visualize data extensively—scatterplots, heatmaps, and residual plots often reveal patterns invisible to summary statistics.

- Maintain a skeptical mindset: treat high correlations, especially in large data sets, as starting points for further inquiry rather than proof of effect.
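For the power-analysis guideline, a common closed-form approximation for testing a single correlation uses the Fisher z transformation. The sketch uses only the Python standard library; the function name is hypothetical, and exact methods (as implemented in dedicated power software) can differ by an observation or so.

```python
import math
from statistics import NormalDist


def n_for_correlation(r, alpha=0.05, power=0.80):
    """Approximate sample size to detect a population correlation r with a two-sided test:
    n = ((z_{1 - alpha/2} + z_{power}) / atanh(r))**2 + 3, rounded up."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    return math.ceil(((z_alpha + z_beta) / math.atanh(r)) ** 2 + 3)


for r in (0.1, 0.3, 0.5):
    print(r, n_for_correlation(r))  # r = 0.3 -> 85
```

Under this approximation, detecting r = 0.3 at α = 0.05 with 80% power needs about 85 observations, and the requirement grows roughly with 1/r² as the target correlation shrinks.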

By integrating methodological rigor with theoretical insights, analysts can distinguish between authentic relationships and those that merely mimic significance. Vigilance in design, execution, and interpretation is the key to upholding the integrity of statistical conclusions.